Real-Time Data Streaming with Apache Kafka: A Practical Introduction

Batch processing served us well for decades, but modern businesses need data that’s fresh — not hours or days old. Apache Kafka has become the backbone of real-time data architectures.

What Kafka Does

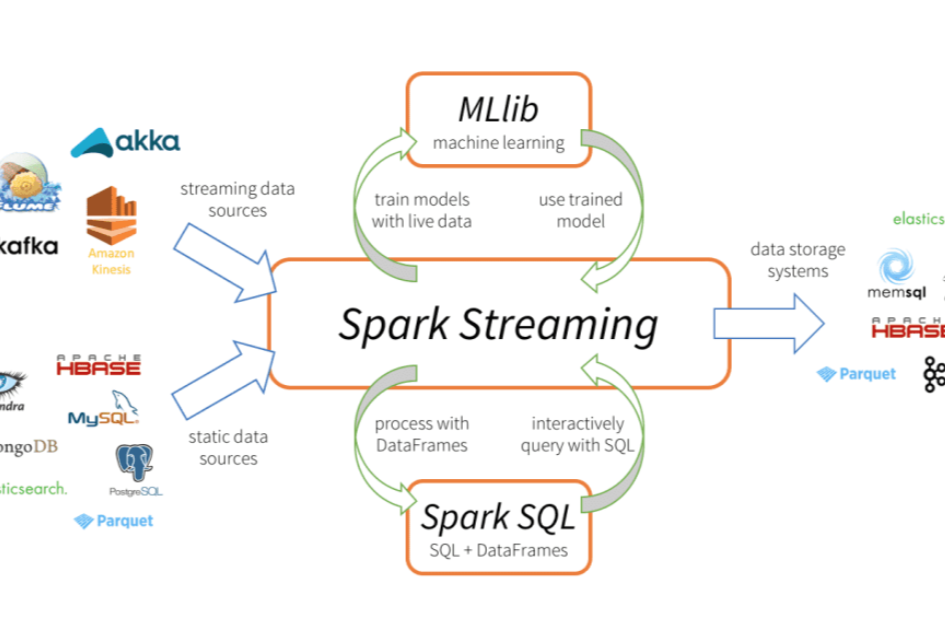

Kafka is a distributed event streaming platform. Think of it as a high-throughput, fault-tolerant message bus that can handle millions of events per second. Producers publish events (a user clicked, an order was placed, a sensor reading changed) to named topics, and consumers read from those topics and process the events in real time.
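To make the producer and consumer roles concrete, here is a toy in-memory model of the idea. It is a sketch under loud assumptions, not Kafka's client API: `InMemoryBroker`, `publish`, and `poll` are hypothetical names invented for illustration, and a real application would use a Kafka client library against a running cluster. What it does mirror is the core model: events are appended to a per-topic log, and each consumer group tracks its own read position (offset), so several independent consumers can read the same events.

```python
from collections import defaultdict


class InMemoryBroker:
    """Toy stand-in for a broker: one append-only log per topic (illustration only)."""

    def __init__(self):
        self._logs = defaultdict(list)     # topic -> ordered list of events
        self._offsets = defaultdict(int)   # (group, topic) -> next offset to read

    def publish(self, topic, event):
        """Append an event to the topic's log and return its offset."""
        log = self._logs[topic]
        log.append(event)
        return len(log) - 1

    def poll(self, group, topic, max_events=10):
        """Return unread events for this consumer group and advance its offset."""
        start = self._offsets[(group, topic)]
        batch = self._logs[topic][start:start + max_events]
        self._offsets[(group, topic)] = start + len(batch)
        return batch


broker = InMemoryBroker()

# Producer side: publish two order events to a hypothetical "orders" topic.
broker.publish("orders", {"order_id": 1, "amount": 40})
broker.publish("orders", {"order_id": 2, "amount": 15})

# Consumer side: two independent groups each receive every event.
dashboard_events = broker.poll("dashboard", "orders")
fraud_events = broker.poll("fraud-check", "orders")

# A second poll by the same group returns nothing new.
second_poll = broker.poll("dashboard", "orders")
```

Because events stay in the log after being read, adding a new consumer group does not require any change on the producer side. The real system adds partitioning, replication, and durable storage on top of this basic shape.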

Common Use Cases

Real-time dashboards, fraud detection, inventory synchronization, personalization engines, and event-driven microservices are all common Kafka workloads. If a business decision loses value when the data behind it is more than a few seconds old, Kafka can help.

Kafka vs Alternatives

Amazon Kinesis and Google Cloud Pub/Sub are managed alternatives with lower operational overhead than running your own Kafka cluster. For most mid-size deployments, Confluent Cloud (managed Kafka) often provides the best balance between Kafka’s power and cloud-managed simplicity.

Not every use case needs real-time processing. If your reports are consumed once daily, batch is perfectly fine. But when latency matters — when a delayed fraud alert means lost revenue — streaming is worth the investment.
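The latency trade-off can be put into rough numbers. The sketch below compares how long a suspicious transaction would wait before an alert could fire under a nightly batch job versus per-event stream processing. Every figure here is an assumption chosen for illustration: the 02:00 batch schedule, the 2-second streaming delay, and the function names are all hypothetical, not measurements of any real system.

```python
from datetime import datetime, timedelta


def batch_alert_time(event_time, batch_hour=2):
    """Earliest time a nightly batch run (assumed at 02:00) would see the event."""
    run = event_time.replace(hour=batch_hour, minute=0, second=0, microsecond=0)
    if run <= event_time:
        # Today's run has already happened, so the event waits for tomorrow's.
        run += timedelta(days=1)
    return run


def streaming_alert_time(event_time, processing_delay=timedelta(seconds=2)):
    """Time a streaming pipeline would alert, given an assumed end-to-end delay."""
    return event_time + processing_delay


# A hypothetical suspicious transaction at 09:30.
suspicious = datetime(2024, 1, 15, 9, 30)

batch_delay = batch_alert_time(suspicious) - suspicious
stream_delay = streaming_alert_time(suspicious) - suspicious

print(f"Batch alert delay:     {batch_delay}")
print(f"Streaming alert delay: {stream_delay}")
```

With these assumed numbers the batch alert arrives 16 hours 30 minutes after the transaction, while the streaming alert arrives 2 seconds after it. Whether that gap justifies the extra infrastructure depends on what a late alert costs in your case.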